Yesterday was a decent day. I worked on our automation scripts that we’ll use to update our Serum indexer and front ends. I haven’t figured out quite how to fully automate it yet, but now I’m generating JSON and TypeScript files directly instead of using print statements to output them on the screen. I’ve got two more functions left to do and I’ll be happy. I’ll just need to copy the files into the respective repos and push them up to make the changes stick.

There are a bunch of hoops that I have to jump through, though. The JSON feed that we’re using doesn’t have all the markets that we want, so I have a prelude of sorts that I’m appending the dynamically generated content to. On top of that, I have to modify the dynamic content as well to correct a perplexing design decision: the same symbol for two items. I’m trying not to get caught up in too many optimizations at this point, but I will need to prepare for some changes in the base currency that’s being used. That will be a challenge since it’s so far outside of what the reference implementation will do.
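The prelude-plus-feed step can be sketched roughly like this. It is a minimal illustration, not the actual script: the field names (`symbol`, `address`), the sample entries, and the numeric-suffix scheme for duplicate symbols are all assumptions made up for the example.

```python
import json

# Hypothetical hand-maintained markets that the feed is missing.
PRELUDE = [
    {"symbol": "EXTRA1", "address": "addr-extra-1"},
]


def disambiguate(markets):
    """Append a numeric suffix to any symbol that appears more than once."""
    seen = {}
    result = []
    for market in markets:
        symbol = market["symbol"]
        count = seen.get(symbol, 0) + 1
        seen[symbol] = count
        fixed = dict(market)  # copy so the feed data is not mutated
        if count > 1:
            fixed["symbol"] = f"{symbol}-{count}"
        result.append(fixed)
    return result


def build_market_list(feed):
    """Prelude entries first, then the corrected feed entries."""
    return PRELUDE + disambiguate(feed)


# Made-up feed content with a duplicated symbol.
feed = [
    {"symbol": "ITEM", "address": "addr-a"},
    {"symbol": "ITEM", "address": "addr-b"},
    {"symbol": "OTHER", "address": "addr-c"},
]
markets = build_market_list(feed)
print(json.dumps(markets, indent=2))
```

The first occurrence keeps its symbol and later duplicates get a suffix, so existing references to the original symbol keep working. A real fix would probably use a more meaningful label than a number.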

Right now the markets are all tied to USDC, but Atlas Co. is planning on creating ATLAS ones as well. We currently have the markets identified only by the NFT assets, but when the ATLAS markets go live we’ll need to distinguish between the two. I already know how to update the tickers in our Serum indexer; I just need to update the Redis key rename script that I used previously and I can gangload the changes. Updating the market itself can be done relatively easily once the feed has been updated, but the question is how to make the best user experience to distinguish between the two?
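The rename step could be planned along these lines. This is only a sketch: the `ticker:` prefix, the `ASSET/CURRENCY` naming, and the sample keys are hypothetical, and the code only builds the list of renames rather than talking to Redis.

```python
def plan_renames(keys, currency="USDC", prefix="ticker:"):
    """Return (old_key, new_key) pairs that tag each ticker with its currency."""
    pairs = []
    for key in keys:
        if not key.startswith(prefix):
            continue  # not a ticker key, leave it alone
        name = key[len(prefix):]
        if "/" in name:
            continue  # already tagged with a currency
        pairs.append((key, f"{prefix}{name}/{currency}"))
    return pairs


# Made-up keys for illustration.
existing_keys = ["ticker:SHIP1", "ticker:SHIP2/USDC", "meta:version"]
renames = plan_renames(existing_keys)
for old, new in renames:
    print(f"{old} -> {new}")
```

Each pair could then be fed to the Redis `RENAME` command in one batch. Building the plan first makes it easy to eyeball the changes before anything is touched.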

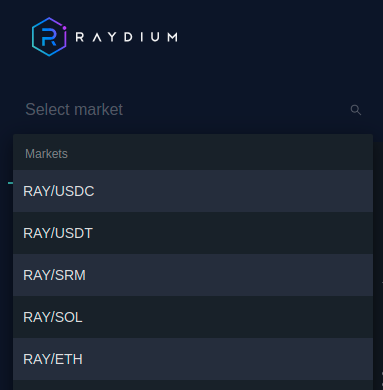

The current market list is already really crowded. We’ve got at least 78 items in the list. Doubling everything would just be too much. Splitting the market lists with some sort of top-level selector makes more sense, but I’m already dreading the program logic required to do such a thing.
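The data side of a top-level selector might be as simple as grouping the markets by currency. Here is a minimal sketch, assuming market names take an `ASSET/CURRENCY` form and that untagged names default to USDC; both are assumptions for the example, not how the list works today.

```python
from collections import defaultdict


def group_by_currency(market_names, default="USDC"):
    """Group market names by the currency after the slash."""
    groups = defaultdict(list)
    for name in market_names:
        asset, _, currency = name.partition("/")
        groups[currency or default].append(asset)
    return dict(groups)


# Made-up market names for illustration.
names = ["SHIP1/USDC", "SHIP1/ATLAS", "SHIP2", "SHIP2/ATLAS"]
grouped = group_by_currency(names)
print(grouped)
```

The selector would then pick one key from the result and render only that list, so each view stays at roughly the current length instead of doubling.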

I also need to update the DEX code to make sure that we can even take fees in another base currency. It’s currently coded for USDC and USDT, so I need to add some parameters for ATLAS as the base currency. It shouldn’t be too bad; Raydium currently has several markets for their token, so I know it’s not impossible.

I have a lot of things to add to the exchange project Kanban board, that’s for sure. I’m not sure how much I’ll prioritize these things, as I have a couple of other Solana-related projects that I’ll be looking at. One is to really delve into the RPC API and start looking at accounts and transactions to see if I can recreate history. The other is to explore the Metaplex monorepo and figure out how Candy Machine and Fair Launch work.

I’m really getting pulled in now.